Is AI in Writing a Problem?

Mechanical Skills Change, But the Fundamental Art Doesn't

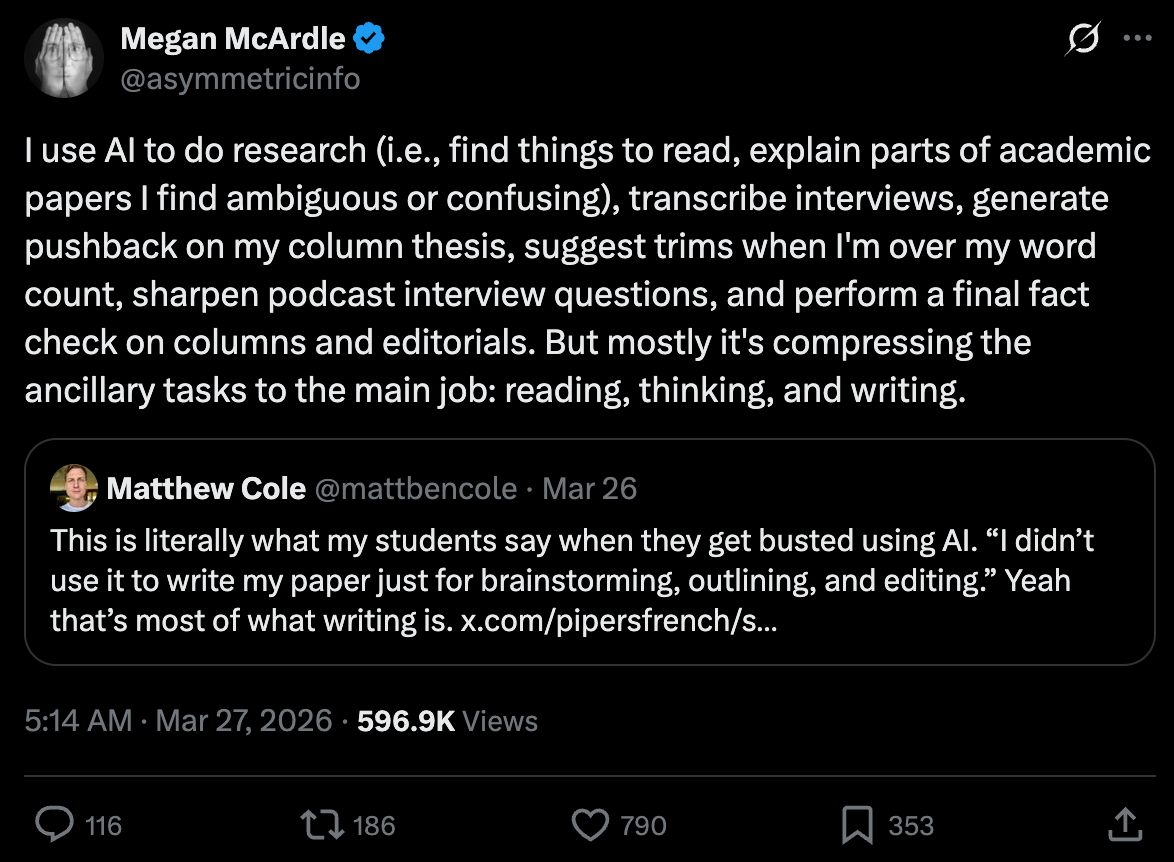

Megan McArdle, a columnist at The Washington Post, shared her AI workflow on X.

Basically, it’s a research assistant and copyeditor. While she doesn’t have a human research assistant, her job at The Washington Post obviously provides a human editor and copyeditor. It doesn’t seem too crazy to me—she doesn’t even seem to generate any text that goes into her column at all.

Of course, the Internet went bonkers.

Geez.

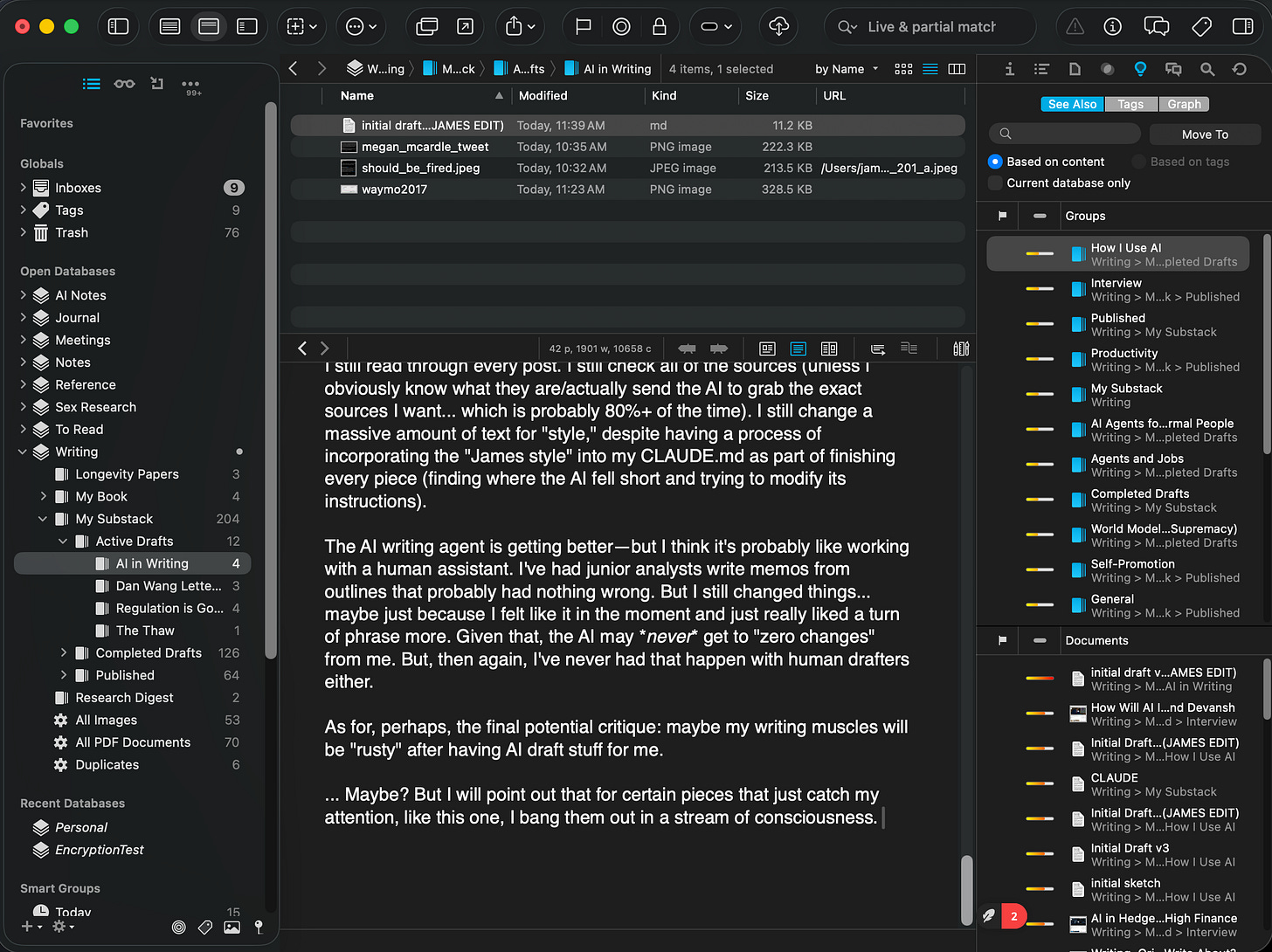

I’ve been open about how I use AI for writing. I even showed the entire process with AI (outlining, editing, and so on) in my article about agents, just to show what the AI can and cannot do.

The short summary of how I use it: I have ultra-detailed bullet outlines (often with literal turns of phrase I want incorporated), and I ask the AI to clean them up into an actual piece. It also grabs links/sources when I cite something but don’t actually link it. It’s basically doing what I would trust a student intern to do.

And then obviously I read and edit it. In that case, I had four rounds of edits, including two where I literally went in and changed a LOT of text. It’s not really uncommon for me to have 12+ rounds of edits at this point. Of course, I do ask it to fact-check as well (I actually ping-pong fact-checks between Claude and ChatGPT so they check each other). And guess what, sometimes I disagree with both of them or catch things that neither does!

Is that a problem? Well, perhaps if you uncritically rely on AI to just be “perfect” and don’t read the work. But I never totally trust even human editors and copyeditors.

I had a world-class team of editors for my book, and I still had to read what they did carefully. They missed some things. They introduced errors (due to lack of subject matter knowledge). They sometimes mangled AI-industry phrasing (like turning “compute” into “computational power” everywhere).

Questions About Authorship

I’m using AI more extensively than Megan McArdle is. But I’d assert that it’s unquestionably my writing—just as a human-edited article still is, when I’m on the byline. I ultimately need to approve of whatever goes out. More than that, I need to make it my own.

(As a note, major publications sometimes have weird processes around this, especially for “final edits” where the writer might NOT actually see it or the title/subtitle... which ironically makes the “but it’s still my work” case weaker for human-edited pieces than AI-edited ones, but this is still 80/20 true.)

I give several examples in my book, What You Need to Know About AI:

Photographers should get the credit, not “Kodak” or “Canon”

Some well-known artists like Andy Warhol (the Campbell’s Soup can guy from the 1960s), who often had assistants make their work and didn’t actually touch it themselves, are still credited (I think rightly) with their art pieces

Death of an Author by novelist and journalist Stephen Marche, an “AI-generated” novella published in 2023, which plays a prominent role in one of my book’s chapters. The summary is that he used AI (mostly ChatGPT) to generate it (“95%” of the words)... but still wrote the plot himself and basically kept mashing “re-generate” until he got what he wanted. I argue strenuously that it’s pretty hard to accept the prior two examples but not this as “authorship.”

I think photography is especially instructive. It changed the nature of “art” significantly in the late 19th and early 20th centuries, and arguably unleashed one of the most creative artistic periods in history. Impressionism/Monet, Cubism/Picasso... all of it came about in part because the mechanical skill of merely capturing the scene in front of you was less interesting when a “machine” (the camera) could do it.

But not only did art evolve, photography itself became an art form. I think you’d find very few people who’d deny photographers their due as artistic creatives in their own right.

Hallucinations?

The other major critique that Megan McArdle got was that fact-checks and paper summaries would be terrible, because the AI would just “hallucinate” all over the place. On the Central Air Podcast with Josh Barro, Megan McArdle, and Ben Dreyfuss, she pushed back on people calling LLMs “stochastic parrots,” implying the label no longer applies.

Except... it does. If you look under the hood, they are statistical models that try to produce a plausible continuation of the conversation, given what you say. I get her frustration, but the term itself is not the problem. The problem is people using it as shorthand for “useless.” After all, as we’ve seen, it’s very much not!

Alejandro Piad Morffis describes this better than I do. They ultimately just do things “within their dataset.” As per Logan Thorneloe, ML engineer at Google:

They have a lot of data and post-training these days, so they are actually fairly good. They will never be perfect, though—that’s part of the entire tradeoff of the way we’ve built modern deep learning AI/agents (another plug for my book: I explain why it’s impossible to totally banish “hallucinations” without making current AI commercially useless... like the AI from the 1980s).

Funny enough, I did not use AI in any appreciable fashion for writing my book. One reason is that my publisher (and major platforms like Ingram) ban AI in writing. I had to certify that I didn’t use AI in order to publish it. So, it was already a strikeout there, but I could have used it like McArdle. Research assistant. Fact-checks. Nothing against the rules about that. But I didn’t. Why?

Well, it wasn’t because I have a phobia of AI. After all, I was writing a whole book about how it works. No, it was because back then hallucinations were pretty bad. This wasn’t that long ago. We’re talking about late 2024 and early 2025! But especially at the beginning of my book process, anytime I tried to get AI to help me grab papers or research, it’d hallucinate like crazy!

Half of the links for papers or articles wouldn’t work because the URL was made up. The other half? Usually I’d click through and it wouldn’t be what it was described as at all. With a research assistant like that, well, I don’t think it’s a mystery why I shelved trying to use AI to do any research for me, let alone fact-check.

Given my own experience, I’m not too surprised that some people think using AI like McArdle is insane. Times have changed, though.

Again, human research assistants and fact-checkers also make mistakes. But I’d assert the reason why AI doing this felt so useless and terrible was that it made mistakes no human would ever make.

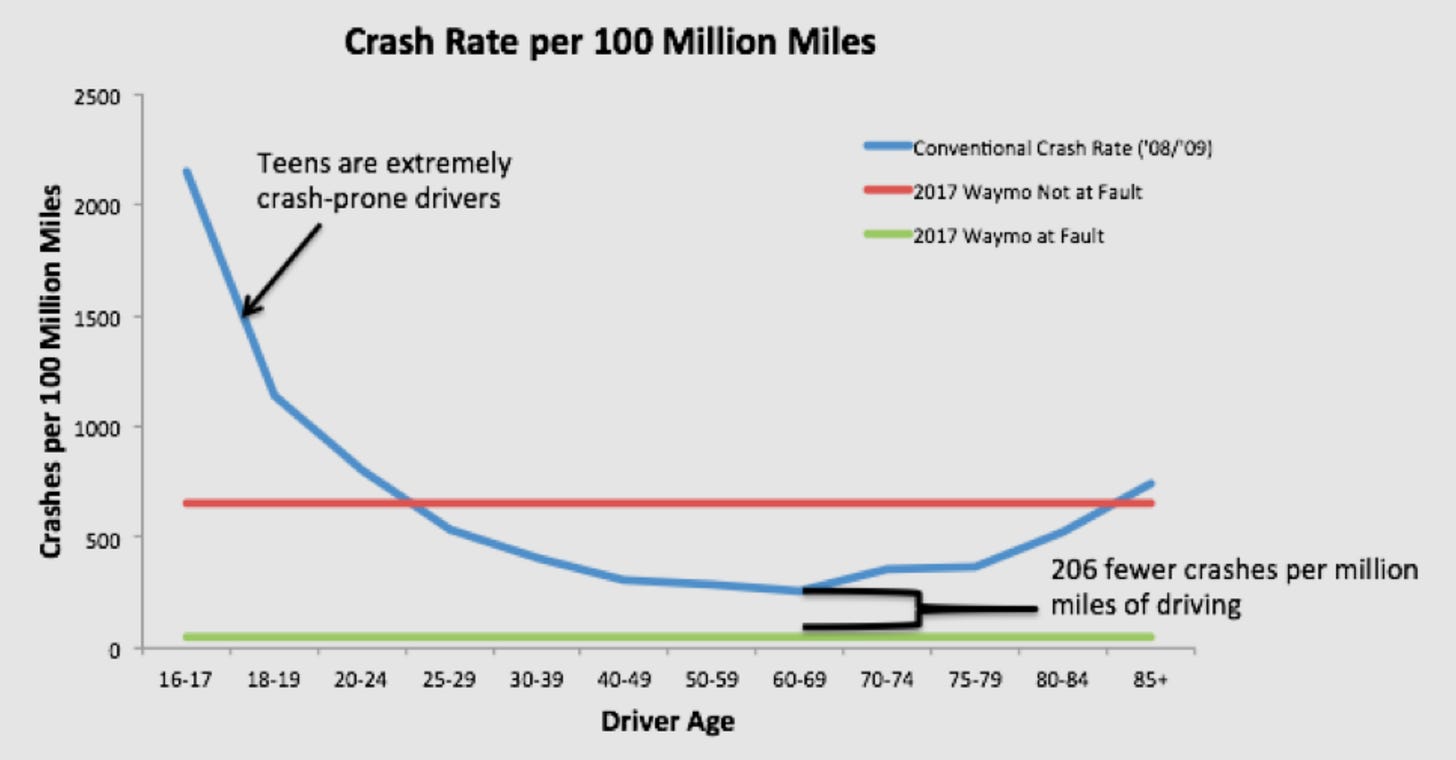

This is the same problem that self-driving cars keep running into.

Waymos (and most major autonomous platforms) have been safer than humans for a while. The above chart is from RMI on Waymo in 2017. While I might have looked with some skepticism back then at hitting exceptional/novel conditions, Waymos have only gotten safer since, and it’s hard to argue at this point that they’re worse than the average human driver—who is pretty terrible.

The problem, even now?

Self-driving cars sometimes make mistakes that no human driver would. They get confused in circumstances that they have never encountered (...outside their dataset...). They require intervention when some of the decision process gets complicated. They easily get trapped by malicious people doing things like throwing cones on them (regardless of your stance on them, I think terrifying people riding in them is not great—and ironically, those people only feel safe to do so because they expect the self-driving cars to properly stop instead of run them over!).

Since 2024, the result of a massive amount of post-training, harnesses, and tools by the frontier labs is that LLMs not only fail more predictably (e.g., on very recent events, like Claude getting very confused when I was writing about the Iran conflict), but they also fail in more humanlike ways.

They slightly misinterpret studies. They get overly conservative about the interpretation of certain data. They miss studies that would change the conclusion quite a bit...

Basically, they have failure modes very similar to working with human assistants. The benefit of a huge amount of money, RLHF (Reinforcement Learning from Human Feedback), and just sheer work put into them is that they’ve been tuned to behave in this way. AVs (autonomous vehicles) do not have the kind of volume of data and freedom to do so, though I’m sure the companies are trying their best to do the same thing.

That, however, makes it far easier for a human who doesn’t dedicate their life to using AI to actually use it effectively. The human can predict how the model will fail. And that makes errors (hallucinations) acceptable and something that can be worked around, instead of being a showstopper as they were a mere few years ago.

My Writing Process in the Future?

I’m working on another book (well, proposal and research anyway). I’ll share more about it over time, but one obvious question from this is:

Will I be using AI to write my next book?

Well, unless publisher contracts and Ingram policies change, no, purely from a requirement perspective. However, the most valuable use of AI for me these days is actually the same as McArdle’s. I get to utilize a process that feels a bit more like having a research and editing team, even if I don’t and will never find it economical to have one for, say, Substack posts.

I still read through every post. I still check all of the sources (unless I obviously know what they are/actually send the AI to grab the exact sources I want... which is probably 80%+ of the time). I still change a massive amount of text for “style,” despite having a process of incorporating the “James style” into my CLAUDE.md as part of finishing every piece (finding where the AI fell short and trying to modify its instructions).

The AI writing agent is getting better, but I think it’s probably like working with a human assistant. I’ve had junior analysts write memos from outlines that probably had nothing wrong with them. But I still changed things... maybe just because I felt like it in the moment and just really liked a turn of phrase more. Given that, the AI may never get to “zero changes” from me. But, then again, I’ve never had that happen with human drafters either.

The Future of Writing

As for, perhaps, the final potential critique: maybe my writing muscles will be “rusty” after having AI draft stuff for me.

... Maybe? But I will point out that for certain pieces that just catch my attention, like this one, I bang them out in a stream of consciousness. That’s how a lot of chapters in my book came together (... which also saw a lot of chapters get discarded wholesale as I moved stuff around).

Zero AI involvement.

Relative to my process/drafting from an “initial sketch” (detailed bullets) in my “How I Use AI Agents” piece... I one-shotted this article, basically! Screenshot as I’m writing this now. I’ll probably run this through fact-check and copyedit, but I expect it’ll largely be in the form it is now that came out of sitting down for about 45 minutes to bang this out.

And even as I’m trying to push the boundaries of what I can do with AI (if nothing else, to understand it), I still often end up writing this way. Sometimes my “sketch”/outline to give to the AI… is basically my whole article. But so it is.

Honestly, if you enjoy writing, I don’t think having that kind of writing session is ever going away. We can take photographs now, but plenty of people still enjoy painting landscapes and portraits. Sure, fewer people can “make money” from it... but how many people ever did really make much money from it? There’s a reason art has had patrons for much of its history.

Writing, as per the Central Air Podcast episode, is also somewhat brutal with few, highly elite and coveted slots—which McArdle suggested might be part of the reason people are so angry, since there’s some jealousy and anger about the industry mixed in.

Just as fewer people can make money doing street art portraits (though, obviously, this still exists in limited fashion in, say, tourist areas), fewer people can make money with listicles and the classic human writing slop like “10 Reasons Why Your Cat Doesn’t Love You,” or whatever. But it’s not like people were ever making much money from that either...

In any case, will AI change writing? Probably. Though in the most artistic expressions of it, I expect it’ll probably elevate it. After all, if we all get used to reading AI summaries, elegant writing will suddenly stand out that much more—just as a Picasso is quite different than a photorealistic, well, photo. Not to do the classic, “and it’ll diversify the field!” but it will likely also help those who have trouble just typing words, either from physical or non-physical disabilities.

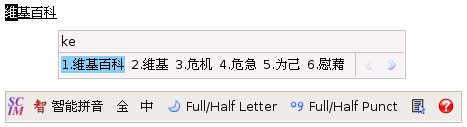

Just as we shifted from pen to typewriter to word processor (... which is decried for “losing something” even to this day), we will likely shift in how we produce words.

However, it was always the case that we didn’t really care about the literal “shapes on the page” that happened to be how we convey meaning. The valuable part of writing has always been the expression and humanness—not the mere mechanical act of getting those words on the page.

As an aside, if you want a particularly fascinating version of this, 提笔忘字 in Chinese means “character amnesia,” referring to an ever-more-common scenario of not actually being able to write characters by hand, despite recognizing them. Why? A lot of Chinese is written on either keyboards or mobile devices with SCIM, which is like super-amazing autocomplete where you can often just write the first pinyin “letter” of each word in your sentence to write the whole thing. Is it bad? I don’t know, but it is a change in the relationship to words, mediated by technology. Chinese literature and language haven't collapsed, though.

Thanks for reading!

I hope you enjoyed this article. If you’d like to learn more about AI’s past, present, and future in an easy-to-understand way, I’ve published a book titled What You Need to Know About AI.

You can order the book on Amazon, Barnes & Noble, Bookshop, or pick up a copy in-person at a local bookstore.

One general comment I'll make myself—I think one thing I didn't cover in the piece is that the problem with AI writing, when done poorly, is asymmetry. Specifically, expecting the reader to give you time and attention... when you didn't spend much yourself. Ultimately, this is what characterizes "AI slop."

Utilizing AI in writing, when done poorly, does this. This is still new, and I'm experimenting. But I suspect that when done well, it should be indistinguishable or better than writing without AI. Why? You don't really do anything different in the craft—at least not for anything you actually release to the world. And, if anything, it does the "low differentiation" parts of linking news articles or cross-checking stats, freeing you up to spend more time on what actually makes your writing yours. For me, it certainly doesn't "save time" I need to spend on a piece. It mainly reallocates how I spend it.

One thing worth adding on the asymmetry is that it runs in the reader's head before they finish the first paragraph. Readers know AI can generate fluent prose, so they pre-filter — deciding whether you spent real effort before they invest theirs. Writers using AI well end up paying a trust tax generated by writers using it badly.

Which reframes "indistinguishable or better." The bar isn't output quality anymore, it's visible signals of effort — specificity, a point of view that couldn't have come from a generic prompt. AI makes the generic parts of writing cheap, which puts more weight on the parts only you can produce.