AI Agents and Jobs: The Human Bottleneck

AI Changes the Work, Not the Need for Judgment

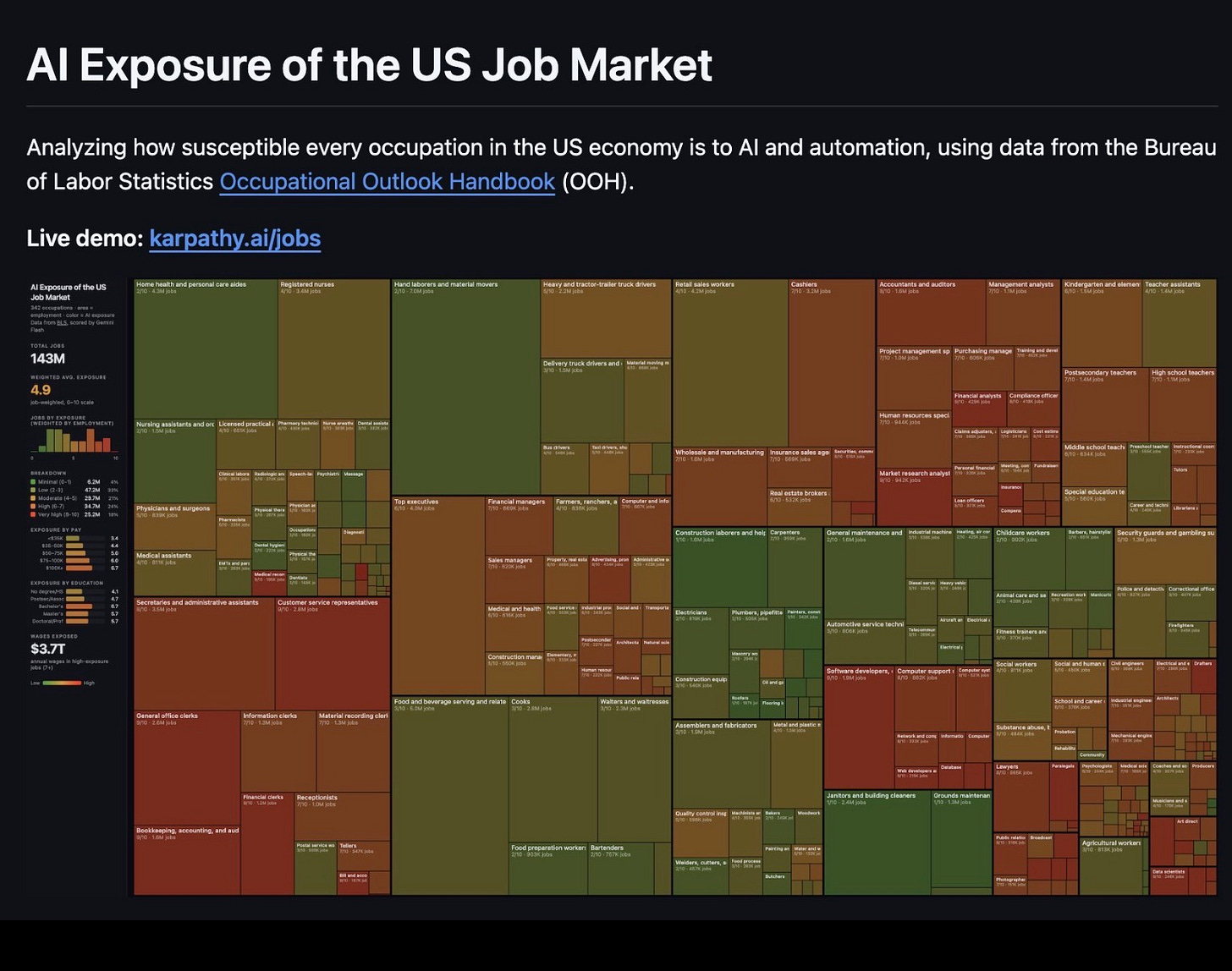

The narrative has shifted. Agents are now mainstream. People have been freaking out about Andrej Karpathy’s market map (karpathy.ai/jobs) that shows a huge portion of jobs are “exposed to AI.” Ben Thompson is now arguing he no longer thinks we’re in a bubble because agents are likely to generate huge value—and profits.

My article from last year, “The Boring Phase of AI,” was right about the impact of agents—but I guess people don’t find it so boring after all. I probably contributed somewhat to this trend with my article about how I personally use AI agents as well. As a note, since that article, my agent workflow has advanced so much that what I outlined there is fairly primitive to me now. What a difference a few weeks make in our current environment.

The big question on everyone’s mind, of course, is whether or not a huge portion of our population is now out of a job.

A Recent, Personal Case Study

I was recently onboarding a new analyst. She was quite patient with my likely unreasonable demand that she automate some of our manual spreadsheets so that they incorporate new data and update their tables/charts, all without human intervention.

Unreasonable. At least in part because her skills are not in software engineering. She was a good sport about it all, though. She was taking an introductory course in Python and offered to try to do what she could with VBA while trying to scramble up the massively steep curve I laid out for her. It’s a lot to ask, especially since, like most financial institutions, most of what we do runs on Excel.

I’m not a total monster, though. I said I understood but wanted to show her how I’d do it. I took the largest spreadsheet, dumped it into a new directory, opened Claude Code, and told it to break the spreadsheet up into distinct Data, Analyses/Logic, and View layers. I wanted it to prototype the charts/tables in HTML and clearly document how things were used... as well as what specific data was needed so we can automate pulling it from our financial database later. Then I hit enter.

From there, Claude Code was not particularly fast. It took around 30 minutes to finish while I showed the analyst what it was doing and explained why I asked for what I did. Software engineers among my readers will probably recognize the Data, Analyses/Logic, and View split as essentially MVC (Model-View-Controller). Experienced users of Claude Code will know that standalone HTML is the easiest way for it to provide interactive tables/charts right now. And infrastructure engineers will understand exactly why I wanted a clear spec on what data was required.
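For readers who have not seen this kind of split before, here is a minimal sketch of the idea in Python. It is not what Claude Code produced for us. The sample data, column names, and function names (`load_rows`, `sector_totals`, `render_html`) are all hypothetical, chosen only to show raw data, calculations, and presentation living in separate pieces.

```python
import csv
import html
import io
from collections import defaultdict

# Data layer: a hypothetical CSV standing in for one sheet of a workbook.
# Tickers, sectors, and values are made up for illustration.
RAW_CSV = """ticker,sector,market_value
AAA,Tech,120.0
BBB,Tech,80.0
CCC,Energy,50.0
"""


def load_rows(text):
    """Data layer: parse raw rows and convert types; no calculations here."""
    rows = []
    for rec in csv.DictReader(io.StringIO(text)):
        rows.append(
            {
                "ticker": rec["ticker"],
                "sector": rec["sector"],
                "market_value": float(rec["market_value"]),
            }
        )
    return rows


def sector_totals(rows):
    """Logic layer: aggregate market value by sector, sorted by sector name."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["sector"]] += row["market_value"]
    return dict(sorted(totals.items()))


def render_html(totals):
    """View layer: build a standalone HTML page containing one table."""
    body = "\n".join(
        f"<tr><td>{html.escape(sector)}</td><td>{value:,.2f}</td></tr>"
        for sector, value in totals.items()
    )
    return (
        "<!DOCTYPE html>\n<html><body><table>\n"
        "<tr><th>Sector</th><th>Market value</th></tr>\n"
        f"{body}\n</table></body></html>"
    )


rows = load_rows(RAW_CSV)
totals = sector_totals(rows)
page = render_html(totals)
```

The point of the separation is that `load_rows` could later be swapped for a database query without touching the logic or the view, which is why the spec on required data matters.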

Still, during that entire time, she watched it quickly sort through an Excel file that is painful to update (and can take hours to debug when something breaks or something new is required) and rapidly build everything... I think it’s fair to say she was, uh, surprised. She expressed a sentiment along the lines of everyone who does her job at a bank likely losing that job.

Anyway, when it finished, we walked through it. We quickly spotted errors (... a blank chart is a pretty good giveaway), but it was pretty good as a one-shot attempt from Claude (Opus 4.6). Still, it never crossed my mind that we would lay people off or that I didn’t need the analyst anymore.

Yes, perhaps she doesn’t have the engineering background I do to know to ask my exact query. But she could easily recognize if the tables/charts were right and—later on, as we expand—what kinds of views are useful. She is able to add a layer of domain knowledge and human judgment. Claude Code and all the other agentic tools are... well... tools.

I want employees who use tools well and can take ownership (and apply judgment) to work. Not even more tools I’ll need to run myself.

Shifting What Skills Are Important

In the past, the ability to build these Excel files—and even format them in pretty (and legible) ways—was extremely important. It wasn’t like that was the “highest value add” task for an expensive analyst in a hedge fund or Wall Street bank. That was just a necessary part of the job for those expensive analysts to actually get to the part where they add value.

That has changed.

In the particular case above, not only did I not really want the analyst working in Excel, I didn’t even particularly care about her finishing her Python course before jumping into things. In fact, as far as “necessary but not high value add” skills I wanted her to learn, I told her to learn how to navigate a command line (use ls, mv, touch, etc.), use a text editor, and use git to collaborate/“save” work.

I do want her to finish the course eventually. But she’s not building production software systems serving millions (or billions) of users that have six sigma uptime requirements. If it looks like it works... and it works... that’s likely sufficient for the job—or at least this part.

Ultimately, the skills and tools that are important are shifting... and quite rapidly. But that’s not the same as “people are no longer useful.”

We’ve automated certain mechanical aspects of digital work that were simply required. Now, human judgment makes up a much higher share of the time and value-add in the work product. That is true in my experience here, and also in the experience of many companies I’m talking to.

Productivity can mean higher earnings. In fact, it generally does. It can also mean fewer people are needed. That, of course, is what everyone is worried about.

The Market Map

Andrej Karpathy’s dataset obviously speaks to those fears. He scraped the Bureau of Labor Statistics’ Occupational Outlook Handbook (342 occupations spanning the entire US economy) and had an LLM score each one on AI exposure from 0 to 10. The result is an interactive treemap at karpathy.ai/jobs, where rectangle size represents employment and color represents exposure. Average exposure: 5.3. Medical transcriptionists score a perfect 10. Software developers land at 8–9. Roofers and plumbers sit at 0–1. If your work product is fundamentally digital, your exposure is high. Physical presence is a natural barrier.
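One caveat when reading a single "average exposure" number: a simple average across occupations and an employment-weighted average can differ a lot, since the treemap sizes each occupation by employment. I am not asserting which one the 5.3 figure is. The sketch below only shows the difference, using placeholder occupations and numbers that are made up and are NOT taken from the dataset.

```python
# Hypothetical placeholder rows: (occupation, employment, exposure score 0-10).
# These values are invented for illustration; they are not from karpathy.ai/jobs.
OCCUPATIONS = [
    ("Occupation A", 1_000_000, 9),
    ("Occupation B", 3_000_000, 1),
    ("Occupation C", 500_000, 5),
]


def simple_average(rows):
    """Average exposure where every occupation counts equally."""
    return sum(score for _, _, score in rows) / len(rows)


def weighted_average(rows):
    """Average exposure where each occupation counts by its employment."""
    total_jobs = sum(jobs for _, jobs, _ in rows)
    return sum(jobs * score for _, jobs, score in rows) / total_jobs


simple = simple_average(OCCUPATIONS)
weighted = weighted_average(OCCUPATIONS)
print(f"simple: {simple:.2f}, employment-weighted: {weighted:.2f}")
```

With these placeholder rows the weighted figure comes out lower than the simple one, because the largest occupation has the lowest score. The real dataset could tilt either way.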

By the way, I’ve talked about the physical world and data friction being a huge barrier to certain segments of AI: in earlier articles, on a podcast, and in an entire chapter of my book.

Still, think about it a bit. Follow the logic.

If you read those scores as “whose jobs will be eliminated,” the chart tells you top executives, software architects, and portfolio managers are the most screwed—and roofers and plumbers are sitting pretty.

Look, I have nothing against manual labor. But do you really believe the takeaway is that it’s better to be a manual laborer than a CEO? That programmers should retrain as bricklayers?

If that reading is right, then that’s going to be great right up until the point robots take over that physical work too, making humans entirely redundant. Sure, there are people who believe that. I’m willing to even say that can be a coherent position, but it requires assumptions about robotics and general intelligence that are significantly ahead of where the technology actually is.

What the scores actually show is whose jobs will change the most. High exposure means high disruption to how the work gets done—not that the work disappears.

Oracles and Skill Dispersion

I’ve written about my concept of oracles before. The term is borrowed from cryptography, where an oracle is a source of information that verifies whether you’re on the right track. Even tiny amounts of information can make a massive difference. The same applies to human experts-in-the-loop working with AI.

That’s exactly what played out when I was showing the new analyst how to process the sheet. I was the oracle. I could tell it how to approach the problem. I could glance at the HTML output and immediately spot problems. Without a domain knowledge overlay, the output would have looked impressively complete and been subtly wrong in ways that matter.

So a financial analyst scoring 8 on Karpathy’s map doesn’t mean financial analysis stops being needed. It means the mechanical components (pulling data, formatting reports, running standard models, and producing charts) are highly susceptible to automation. And they are. I just demonstrated that with a spreadsheet. But the analyst is still needed. That analyst can just move much faster, and Excel ninjutsu is less valuable, especially versus good intuition and judgment. Again, changed, not destroyed.

That does raise a question. What happens to humans who aren’t experts? Say, young people or fresh graduates?

Humans-in-the-Loop—AKA, Human Bottlenecks

As I said, I’ve advanced quite a bit with my AI agent setup.

I’ve been context-switching between 10–15 different agentic projects daily. All of them are bottlenecked on the same thing: having a human who can 1) take the initiative to start the project, 2) know what the actual output should look like, and 3) overlay domain knowledge or know when to have the agent fetch more context. That would be me in this case.

There are experiments in trying to take the human out of these agentic loops. StrongDM recently put out their version with a lot of PR—but the basic pattern holds regardless of tooling.

These three bottlenecks are the same bottleneck described three ways: judgment.

Which is exactly the skill I argued in “White-Collar Apocalypse” gets elevated rather than devalued by AI. The mechanical skills get cheaper. The judgment skills get more valuable.

Either way, I’ve been trying my hardest to take myself out of the loop. Relative to other people, I’m actively trying to put myself out of work. While I have been able to roll some of these agents up to be managed by higher-level agents (... ironic, I’ve created a corporate hierarchy from my agents), I still can’t take myself out.

If anything, it has steadily increased the pace of decisions I need to make, as it keeps increasing the pace of me getting stuff done.

But What About the Layoffs?

It’s worth being clear-eyed about what’s actually driving the layoff headlines. Companies like Block and Meta hired aggressively during the pandemic boom and are now trimming bloat—something tech does periodically regardless of the prevailing narrative. Previously, you’d hire McKinsey to come in and tell you to cut 15%. Now you can just cite “AI.” It’s a convenient story, but a lot of what’s being labeled as AI-driven displacement is really just cyclical correction with better PR.

What AI actually does is make high-performing, capable people even more individually valuable. That’s the oracle effect in practice. The senior analyst who can direct agentic workflows and evaluate their output is now doing what used to require a team. That doesn’t mean the team disappears—at least not everywhere. For my own organizations, which aren’t bloated to begin with, the freed time doesn’t mean we get rid of people. It means we can finally do the proactive work we never had bandwidth for—projects that were always worth doing but sat in the backlog because everyone was buried in mechanical tasks. The pie gets bigger.

The Pipeline Problem / Young Person Job Apocalypse?

I’ve talked about this plenty, but Josh Blanchfield helpfully gave me an even better illustration of the point.

Yeah, bloated organizations will lay people off, but “old industry” tends to be conservative and slow regardless. They’ll adopt gradually, and the adjustment will be similarly gradual. New organizations?

Well, it’s not that AI is eliminating jobs. It’s that organizations, seeing the productivity gains from senior people using AI, will stop hiring juniors. It’s destroying job openings, even if jobs themselves aren’t being lost.

So where do future oracles/experts come from? This generation isn’t going to be immortal. Junior employees spend years building domain knowledge, making mistakes, and developing the judgment that makes them effective evaluators of AI output. Cut off that pipeline and you’re consuming your seed corn.

Everyone obviously knows this, but it’s a prisoner’s dilemma. Someone needs to hire those young people and train them up. That’s expensive though. Someone needs to do it... but not us.

At the risk of being repetitive: AI automates the mechanical components of digital work. That raises the value of human judgment. But judgment is developed through years of apprenticeship. Which means the real medium-term risk isn’t mass replacement—it’s underinvestment in future experts. Unfortunately, I don’t really have a good answer for this. Where it’ll land is still “wait and see” for me.

Where This Leaves Us

I’ve been called an “AI skeptic”—which is not a label I use for myself. A Googler called me one at a recent book signing. The reality is, I’m neither a doomer nor an accelerationist. I don’t think we’re all going to die, and I don’t think AGI is around the corner. But I absolutely think AI is going to make massive changes in our economy.

There are great macro thinkers like my former colleague Paul Podolsky who called early that AI productivity is a bigger deal than most financial analysts think—and I agree.

I still believe we’ll eventually have a bubble pop, because that’s just the nature of new technologies. But, sure, as per Ben Thompson, it’s far less obvious now that one is imminent. There are real productivity gains showing up in the data. Things are going to change. Hell, things have already changed.

It isn’t likely to be the economic apocalypse that Citrini’s thought experiment envisioned—with mass white-collar unemployment and “Ghost GDP” from AI displacing all white-collar work by 2028. I’m still highly skeptical of that. But AI is going to change the nature of those jobs profoundly. And the question of who adapts well comes back to that same oracle layer.

My analyst will be fine. My teams will be too. There may be a lot of uncertainty and change... but there’s also a lot of opportunity to do far more as individuals than ever before. It’s similar to when software frameworks made coding a lot easier, the internet made distribution of software instant, and the “cloud” made it possible to run scaled compute infrastructure from a coffee shop. These also all brought down the barrier to entry for creating stuff (especially in startups). Agentic workflows are also a massive jump forward—as large or even larger.

Karpathy’s treemap shows the landscape of change. The oracle framework explains who navigates it well. Though the “pipeline question” (funny way of saying kids entering the workforce) does still worry me. I guess we’ll have to wait and see... though at the pace things are going now, it might not be that long a wait.

Thanks for reading!

I hope you enjoyed this article. If you’d like to learn more about AI’s past, present, and future in an easy-to-understand way, I’ve published a book titled What You Need to Know About AI.

You can order the book on Amazon, Barnes & Noble, Bookshop, or pick up a copy in-person at a local bookstore. If you’re in Southern California, I’ll be at Lido Village Books in Newport Beach, CA next Thursday 3/26 from 4-6pm signing books in person if you’re around and want to say hi!

Well, don’t forget this is your first(ish) iteration. What happens on your 100th?

From my experience, AI lacks common sense and yes still needs human interaction. It definitely increases our productivity and in jobs where individuals are overwhelmed and budgets are tight (think any government entity), definitely AI will increase efficiency without taking jobs.

As you’ve mentioned highly capable people will be safe. But what about the rest of the population, of which a majority are likely to be more on the mediocre/average side but still currently employed? I definitely think AI will take jobs, especially the young who haven’t learnt the skills to properly prompt/guide AI.

Balanced piece. If we take your worldview as “reasonable” then the implications are that “average is over” (as Tyler Cowen would put it) and we’ll see further bifurcation in the jobs market.